The world-renowned physicist Stephen Hawking was perhaps one of the very few people with amyotrophic lateral sclerosis (ALS) who survived well beyond the usual 10 years or less. Luckily, his speech sensor helped him communicate. Intel built the impressive sensor suite specially for him.

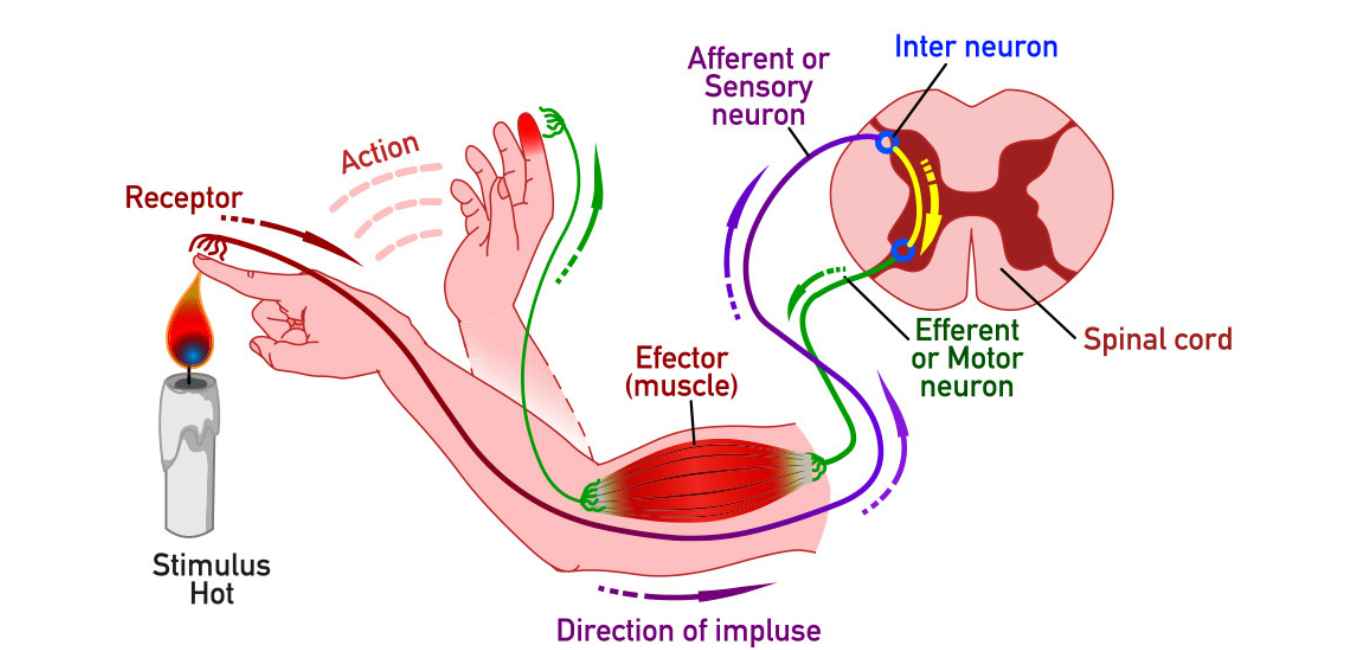

Hawking’s setup worked by measuring minute muscle movements under his eye as he tried to speak. A more promising route would be to study the brain itself and how it translates signals into speech. A new speech prosthetic developed by researchers at Duke University could offer new hope to those who are unable to communicate due to neurological disorders.

Current speech prosthetics decode speech far more slowly than people actually speak. While existing brain readers are slow and require hours of data, the new setup works with just 90 seconds of spoken data, collected through a listen-and-repeat activity during brain surgery.

“I like to compare it to a NASCAR pit crew,” says Gregory B Cogan, professor of neurology at Duke University School of Medicine and one of the lead researchers involved in the project. “We didn’t want to add any extra time to the operating procedure, so we had to be in and out within 15 minutes. As soon as the surgeon and the medical team said ‘Go!’ we rushed into action and the patient performed the task,” Cogan tells Happiest Health.

The task was a listen-and-repeat activity. Participants listened to a sequence of nonsense words, such as “ava,” “kug,” or “vip,” and then spoke each one aloud. The neural activity associated with this speech production was recorded from the speech motor cortex in the brain.

How the brain sensor works

The researchers implanted a postage-stamp-sized sensor array of 256 electrodes onto the part of the brain that governs the nearly 100 muscles that move the lips, tongue, jaw and larynx. The device picks up brain signals, which are then translated into words by a decoding machine. While the setup cannot yet decode full sentences, it can pick up certain sounds with accuracy, making it a full brain-to-speech decoder in the making.

The speed of the decoding is not quite there just yet, says Jonathan Viventi, associate professor of biomedical engineering at Duke University. “We’re at the point where it’s still much slower than natural speech but you can see the trajectory where you might be able to get there.”

Cogan believes that by increasing the number of sensors and the size of the training data, they can use AI technology to create better models of how the brain produces speech. “This will lead us to rates that are at the speed of normal speech. We hope to be there soon and restore speech in patients who have lost the ability to communicate,” he says.

Challenges with speech prosthetics

The hardware is one of the major challenges with speech prosthetics. Cogan explains, “Standard recording technology that is used to read brain signals is either sparse or only available in a small part of the brain. This limits how much of the brain can be used to convert brain signals into speech.”

The second challenge, he says, is the software used to interpret and understand speech signals generated by the brain. “You need good models for how the brain produces speech, and a lot of training data to train these models,” says Cogan, adding that this is something he is currently working on. For the sensor, Cogan teamed up with Viventi, who runs a biomedical engineering lab at Duke.

Working with multiple specialists

With the help of the engineers, they designed a device packed with sensors that can pick up activity patterns of neurons involved in coordinating speech.

“The device that we used in our work is unique due to the increase in number and the closeness of these sensors that can read parts of the brain [involved in speech] to one another. Compared to standard recording devices, our micro-ECoG technology can record with 57 times greater spatial resolution and with millimetre precision,” says Cogan.

Once the new implant was made, Cogan enlisted the help of neurosurgeons at Duke University. Together, they tested the implants on four individuals who had either Parkinson’s or a brain tumour and were undergoing brain surgery. This meant that Cogan had limited time to get in and test the device while in the operating room.

Once recorded, the neural and speech data were run through a machine learning algorithm to see if it could predict the words or sounds that were made during the test. The decoder was accurate 40 per cent of the time, which is an impressive feat for a brain-to-speech device.
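To make the decoding step concrete, here is a minimal sketch of how recordings from a 256-channel array might be matched to speech sounds. It is not the Duke team's algorithm: the article does not describe their model, so a simple nearest-centroid classifier stands in for it. The data are randomly generated, and the sound labels, noise level and function names are all assumptions made for illustration.

```python
import random

N_CHANNELS = 256  # matches the 256-electrode array described in the article
# Hypothetical label set, borrowed from the letters in "ava", "kug" and "vip".
SOUNDS = ["a", "v", "k", "u", "g", "i", "p"]


def make_trial(template, noise, rng):
    """Simulate one recording: a per-channel pattern plus Gaussian noise."""
    return [value + rng.gauss(0.0, noise) for value in template]


def mean_vector(trials):
    """Average a list of trials channel by channel."""
    count = len(trials)
    return [sum(channel) / count for channel in zip(*trials)]


def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def predict(centroids, trial):
    """Return the label whose average pattern is closest to the trial."""
    return min(centroids, key=lambda label: squared_distance(centroids[label], trial))


def run_demo(seed=0, train_per_sound=20, test_per_sound=10, noise=3.0):
    rng = random.Random(seed)
    # Synthetic stand-in for the brain: one random activity pattern per sound.
    templates = {
        sound: [rng.gauss(0.0, 1.0) for _ in range(N_CHANNELS)] for sound in SOUNDS
    }
    # "Training": average the noisy trials recorded for each sound.
    centroids = {
        sound: mean_vector(
            [make_trial(template, noise, rng) for _ in range(train_per_sound)]
        )
        for sound, template in templates.items()
    }
    # "Testing": classify fresh trials and count the correct guesses.
    correct = 0
    total = 0
    for sound, template in templates.items():
        for _ in range(test_per_sound):
            if predict(centroids, make_trial(template, noise, rng)) == sound:
                correct += 1
            total += 1
    return correct / total


accuracy = run_demo()
print(f"held-out accuracy on synthetic data: {accuracy:.2f}")
```

The accuracy printed here reflects only how easy the synthetic data are to separate and says nothing about real recordings. The useful comparison is with chance: with seven candidate sounds, a random guess would be right about 14 per cent of the time.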

This accuracy rate could be further improved with more training data, according to Cogan. “The incorporation of large language models – like the ones used in Siri and OK Google – to help read brain signals, will also help a great deal in improving performance,” Cogan believes.

Trajectory to develop a speech prosthetic

While more training data is needed to improve the implant, there are other challenges that lie ahead. “Designing and manufacturing sensor arrays with such small sensors that are close together is extremely challenging. Getting good quality recordings of the brain can also be challenging, as is recording in the operating room itself,” says Cogan.

As for future work on the recording device, Cogan hopes to develop a wireless version that would allow freer movement in the operating room.
